Through the 90s and into the noughties we witnessed the technologically thrilling megahertz war, as computer processors were cranked up from the tens of MHz to have their speeds more meaningfully measured in GHz. In the last few years this ascent has slowed, some might say stalled, and "the 10GHz result is still as unreachable now as it was five years ago," notes the Intel Developer Zone blog (via PC Gamer).

Claimed by Intel to be the first 1GHz PC processor

What, then, is the major obstacle standing in the way of producing, say, 10GHz processors? For a start, the Intel blog says heat is a problem, but it isn't the biggest hurdle that must be overcome to unlock the GHz gates.

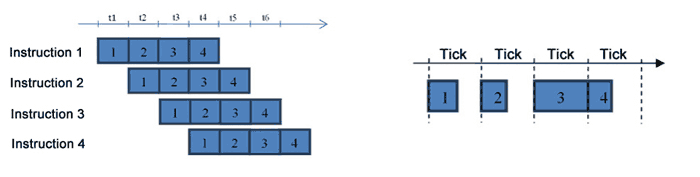

Superscalar architecture 'conveyor level'

Intel's expertise is in its own x86 architecture, which is a so-called superscalar architecture. In summary, a superscalar processor can execute more than one instruction during a clock cycle by simultaneously dispatching multiple instructions to different execution units on the processor. However, raising the processor frequency is not always worthwhile, because the gain is limited by the longest step that has to fit into a clock tick.

Intel's Victoria Zhislina explains the issue as follows, with the help of the diagrams above and below:

"Suppose that the longest step requires 500 ps (picosecond) for execution. This is the clock tick length when the computer frequency is 2GHz. Then, we set a clock tick two times shorter, which would be 250 ps, and everything but the frequency remains the same. Now, what was identified as the longest step is executed during two clock ticks, which together takes 500 ps as well. Nothing is gained by making this change while designing such a change becomes much more complicated and heat emission increases."
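The arithmetic in that quote can be checked with a small sketch. The helper names `tick_ps` and `step_time_ps` are made up for illustration, and the model assumes, as the quote does, that a step must occupy a whole number of clock ticks.

```python
import math

def tick_ps(freq_ghz: float) -> float:
    """Length of one clock tick in picoseconds at the given frequency."""
    return 1000.0 / freq_ghz

def step_time_ps(step_ps: float, freq_ghz: float) -> float:
    """Time a step occupies when it has to span whole clock ticks."""
    tick = tick_ps(freq_ghz)
    ticks_needed = math.ceil(step_ps / tick)
    return ticks_needed * tick

# The 500 ps step from the quote, at 2GHz and at a doubled 4GHz clock.
print(step_time_ps(500, 2.0))  # 500.0 (one 500 ps tick)
print(step_time_ps(500, 4.0))  # 500.0 (two 250 ps ticks)
```

Doubling the frequency halves the tick, but the longest step simply spreads across two ticks and takes just as long.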

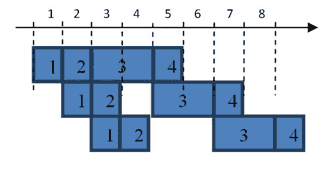

"One could object to this and note that due to shorter clock ticks, the small steps will be executed faster, so the average speed will be greater. However, the following diagram shows that this is not the case."

Put simply, the shorter steps do execute faster at first, but everything that follows is held up behind step 4, the longest one, so the overall pace does not improve. That is why efforts are now concentrated on shortening the longest step.
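The effect of a slow step 4 can be illustrated with a toy model. This is only a sketch under stated assumptions: the five step durations are hypothetical (the article gives only the 500 ps figure), each step handles one instruction at a time, instructions flow through in order, and each step is rounded up to whole ticks. It is not a model of a real superscalar core.

```python
import math

def total_time_ps(step_ps, freq_ghz, n_instructions):
    """Time for n instructions to pass through an in-order chain of steps."""
    tick = 1000.0 / freq_ghz
    # Each step must occupy a whole number of clock ticks.
    durations = [math.ceil(s / tick) * tick for s in step_ps]
    free_at = [0.0] * len(durations)  # when each step is next available
    for _ in range(n_instructions):
        ready = 0.0  # when this instruction can enter the next step
        for j, d in enumerate(durations):
            start = max(ready, free_at[j])  # wait for the step to be free
            ready = start + d
            free_at[j] = ready
    return free_at[-1]

# Hypothetical step lengths in ps; step 4 is the long one.
steps = [250, 250, 250, 500, 250]

slow = total_time_ps(steps, 2.0, 1000)  # 502000.0 ps
fast = total_time_ps(steps, 4.0, 1000)  # 501000.0 ps
print(slow, fast, slow / fast)
```

In this model the first instruction finishes sooner at 4GHz (1500 ps instead of 2500 ps), but from then on one instruction completes every 500 ps at either frequency, so the total time for 1000 instructions barely changes.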

One way to shorten the longest step is to use a more advanced manufacturing process with smaller process nodes. You can see clock speeds gradually increasing from generation to generation with this method. Another way to cut down the tick time is to divide the work into smaller steps, but that has to be balanced against the additional steps this creates, which can themselves slow down execution.

In her blog post, Zhislina goes on to discuss further ideas on the challenge of boosting processor frequency. If you are interested, you can head over to the Intel Developer Zone blog and read the full post.