Nvidia is taking a new approach to training AIs for industrial robots. In a first-of-its-kind deep learning-based system, Nvidia researchers led by Stan Birchfield and Jonathan Tremblay have taught a robot to complete a task simply by observing a human complete it. Once trained, using Nvidia Titan X GPUs, the robot is smart enough that it does not need, for example, every component used in the task to be put in a specific place.

"For robots to perform useful tasks in real-world settings, it must be easy to communicate the task to the robot; this includes both the desired result and any hints as to the best means to achieve that result," explained the researchers. "With demonstrations, a user can communicate a task to the robot and provide clues as to how to best perform the task."

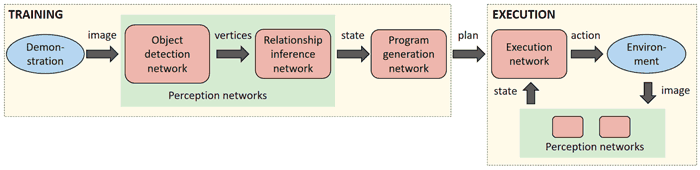

A description of how the method works: A camera acquires a live video feed of a scene, and the positions and relationships of objects in the scene are inferred in real time by a pair of neural networks. The resulting percepts are fed to another network that generates a plan to explain how to recreate those perceptions. Finally, an execution network reads the plan and generates actions for the robot, taking into account the current state of the world to ensure robustness to external disturbances.
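To make the middle stage of that pipeline more concrete, here is a toy Python sketch of turning percepts into a plan. It is not Nvidia's code: the real system uses trained neural networks, whereas this stand-in uses a hand-written ordering rule. The `Relation` type, the `generate_plan` function and the wording of the steps are all assumptions made for illustration.

```python
from typing import List, Set, Tuple

# Hypothetical percept format: (top, bottom) means "top cube is stacked on bottom cube".
Relation = Tuple[str, str]


def generate_plan(percepts: Set[Relation]) -> List[str]:
    """Order the observed relations so every support is in place before the cube resting on it."""
    remaining = set(percepts)
    tops = {top for top, _ in percepts}
    # Cubes that never appear on top of anything are assumed to already sit on the table.
    placed = {bottom for _, bottom in percepts if bottom not in tops}
    plan: List[str] = []
    while remaining:
        # Relations whose supporting cube is already in place can be carried out now.
        ready = sorted(r for r in remaining if r[1] in placed)
        if not ready:
            raise ValueError("observed relations are cyclic, so no stacking order exists")
        for top, bottom in ready:
            plan.append(f"Place {top} cube on {bottom} cube")
            placed.add(top)
            remaining.discard((top, bottom))
    return plan


if __name__ == "__main__":
    observed = {("green", "red"), ("red", "blue")}
    for step in generate_plan(observed):
        print(step)
```

The output is a list of plain-language steps, which is the property the researchers highlight: a person can read the plan and correct it before the robot acts on it.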

Central to the training of the robot AI is a sequence of neural networks that have been taught to "perform duties associated with perception, program generation, and program execution". Thanks to this, when the robot observes a task in the real world, it is ready to duplicate that task, even after just one demonstration.

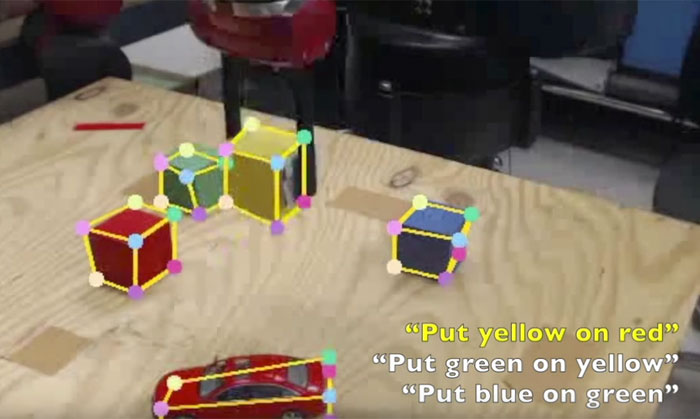

Checking for problems in the robot's perception is easy because, after it has observed a human doing the task, the robot AI generates a human-readable description of the steps necessary to repeat it (example transcript above). The description is easily checked and can be edited to correct observation errors or misinterpretations.

If you watch the three-minute video demonstration, above, you can see the robot completing a coloured cube stacking task. Even when it makes an error due to its camera's depth estimation, it can recover in real time as it "takes the current state of the world into account during execution".
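That recovery behaviour amounts to closed-loop execution: check the world after every action rather than assuming the action worked. The Python sketch below simulates the idea under stated assumptions. The `world` dictionary, the `execute` loop and the deliberately unreliable `make_flaky_act` helper are hypothetical stand-ins, not part of Nvidia's system, which uses a neural execution network and a real camera.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str]    # hypothetical plan step: (top, bottom)
World = Dict[str, str]    # hypothetical world state: cube -> what it currently rests on


def execute(plan: List[Step], world: World,
            act: Callable[[Step, World], None], max_attempts: int = 3) -> int:
    """Repeat each step until the observed world matches it; return the number of actions taken."""
    actions = 0
    for top, bottom in plan:
        attempts = 0
        # Re-observe the world before every action instead of trusting the last one.
        while world.get(top) != bottom:
            if attempts == max_attempts:
                raise RuntimeError(f"could not place {top} on {bottom}")
            act((top, bottom), world)
            attempts += 1
            actions += 1
    return actions


def make_flaky_act(misses: int) -> Callable[[Step, World], None]:
    """Simulated robot arm that drops the cube back on the table for its first `misses` actions."""
    left = {"count": misses}

    def act(step: Step, world: World) -> None:
        top, bottom = step
        if left["count"] > 0:
            left["count"] -= 1
            world[top] = "table"   # missed placement, e.g. a bad depth estimate
        else:
            world[top] = bottom    # successful placement

    return act


if __name__ == "__main__":
    world = {"red": "table", "green": "table", "blue": "table"}
    plan = [("red", "blue"), ("green", "red")]
    taken = execute(plan, world, make_flaky_act(misses=1))
    print(taken, world)
```

With one simulated miss, the two-step plan takes three actions: the failed placement is noticed on the next check and simply retried.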

It is hoped that this new AI technique will not only make robots easier to train but also allow them to work safely alongside human co-workers.